NVIDIA announced a next-generation GPU superchip called Vera Rubin, scheduled for release in the second half of 2026. The platform is named after the astronomer Vera Rubin, whose work provided key evidence for dark matter. It pairs Vera, NVIDIA's first CPU built on custom-designed cores and the successor to Grace, with Rubin, the GPU that succeeds Blackwell. Paired with the Vera CPU, Rubin delivers 50 petaflops of FP4 inference performance, 2.5 times the 20 petaflops of NVIDIA's current Blackwell chips.

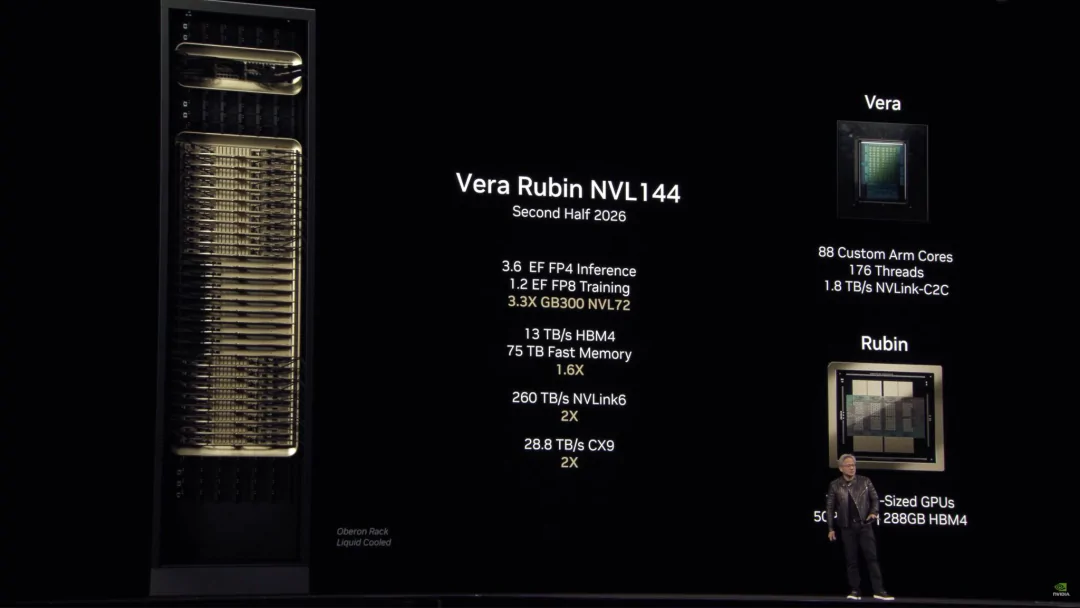

Rubin essentially combines two GPU dies in a single package, integrated with the Vera CPU. Deployed in the new NVL144 rack system, the Vera Rubin platform will deliver roughly double the AI training and reasoning performance of its predecessor, Blackwell Ultra NVL72, and 3.3 times the inference performance of GB300. It will also offer 4.2 times the memory capacity and 2.4 times the memory bandwidth of Grace.

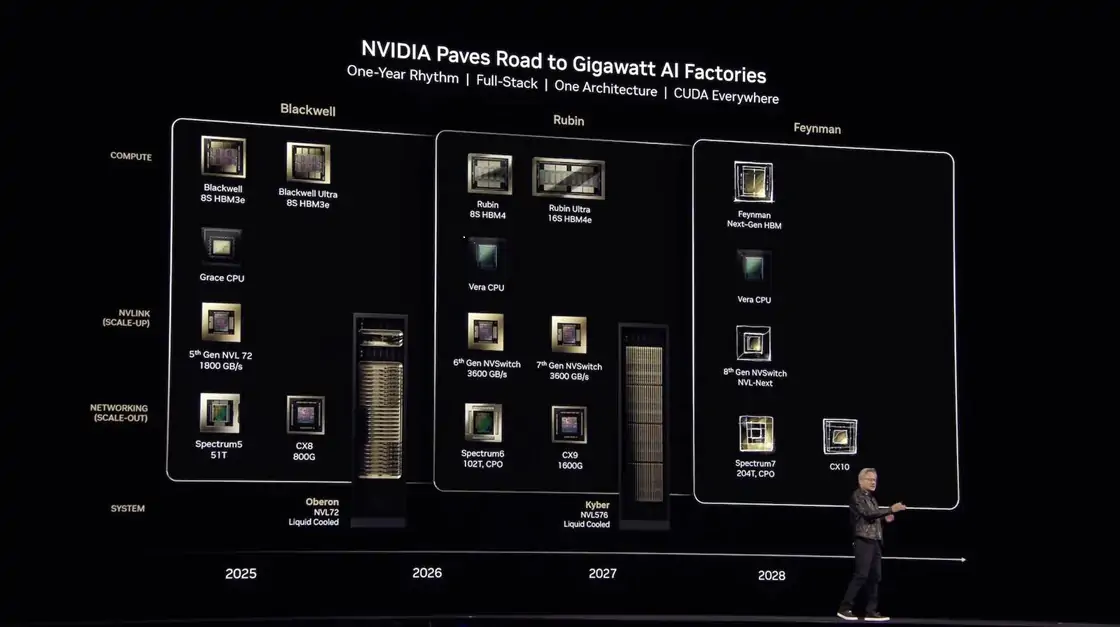

NVIDIA's Upcoming GPU System Roadmap

NVIDIA continues to release new chip families on a yearly cadence to meet the booming demand for AI. In his GTC 2025 keynote, NVIDIA founder and CEO Jensen Huang outlined the company's CPU, GPU, and system roadmap for the next three years.

Blackwell Ultra: The successor to the current Blackwell series, set to launch in the second half of 2025. It keeps the same 8 TB/s memory bandwidth but raises memory capacity from 192 GB to 288 GB.

Vera Rubin: The next-generation superchip following Blackwell Ultra, expected to arrive in late 2026 with 50 petaflops of FP4 AI performance.

Rubin Ultra: Expected in 2027, this GPU integrates four GPU dies in one package and doubles Rubin's performance to 100 petaflops of FP4.

Feynman: A new GPU named after the theoretical physicist Richard Feynman, due in 2028. It will use next-generation HBM memory and be paired with Vera CPUs.
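The generation-over-generation multiples quoted in this roadmap can be checked directly. The following is a minimal sketch that uses only the per-package figures stated in this article. Generations whose figure the article does not give are left out of that table. The table and function names are illustrative, not from any NVIDIA tool.

```python
# Per-package figures quoted in this article. A generation whose value the
# article does not state is simply omitted from that table.
FP4_PFLOPS = {
    "Blackwell": 20.0,
    "Vera Rubin": 50.0,
    "Rubin Ultra": 100.0,
}
HBM_GB = {
    "Blackwell": 192.0,
    "Blackwell Ultra": 288.0,
    "Vera Rubin": 288.0,
}


def multiple(table: dict[str, float], new: str, old: str) -> float:
    """Generation-over-generation multiple: table[new] / table[old]."""
    return table[new] / table[old]


if __name__ == "__main__":
    pairs = [
        ("FP4", FP4_PFLOPS, "Vera Rubin", "Blackwell"),
        ("FP4", FP4_PFLOPS, "Rubin Ultra", "Vera Rubin"),
        ("HBM", HBM_GB, "Blackwell Ultra", "Blackwell"),
    ]
    for label, table, new, old in pairs:
        print(f"{label}: {new} vs {old} = {multiple(table, new, old):.1f}x")
```

Running the script prints three multiples:

- 2.5x FP4 for Rubin over Blackwell.
- 2.0x FP4 for Rubin Ultra over Rubin.
- 1.5x memory capacity for Blackwell Ultra over Blackwell.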

Continuing its tradition of naming chip families after scientists, NVIDIA keeps pushing AI hardware innovation forward. Its roadmap shows how quickly the AI chip industry is accelerating, which matters to tech giants such as Meta, Google, and OpenAI that rely on high-performance GPUs. As AI models continue to scale, these chips will become critical infrastructure for the next generation of AI applications.

Vera Rubin Architecture - Intelligent Combination of Vera CPU with Rubin GPU

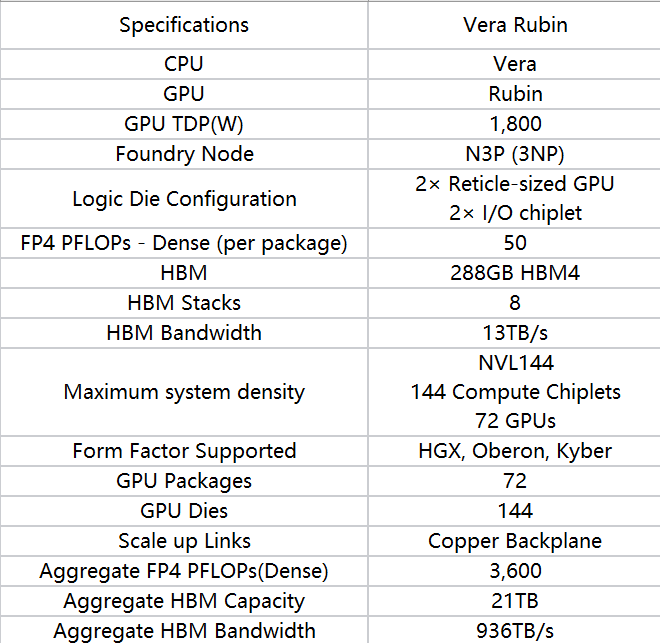

Vera Rubin is NVIDIA's next major GPU architecture. It combines the Vera CPU and the Rubin GPU in a design built for the tight CPU-GPU collaboration that future AI workloads require, and it represents NVIDIA's next major technological leap.

Vera is NVIDIA's first CPU built on fully custom, NVIDIA-designed Arm-compatible cores; Grace used Arm's Neoverse cores. Vera will have 88 custom cores and 176 threads, delivering roughly twice the performance of the Grace CPU used in Grace Blackwell systems. The chip integrates NVLink chip-to-chip (NVLink-C2C) connectivity for interfacing with Rubin GPUs.

Rubin is actually two GPUs: each package contains two reticle-limited dies. It is reportedly fabricated on TSMC's 3nm-class process, either a custom NVIDIA 3NP variant or the standard N3P. Paired with Vera, Rubin achieves 50 petaflops of FP4 performance with 288 GB of new HBM4 (High Bandwidth Memory 4). That is 2.5 times the 20 petaflops of the previous-generation Blackwell chips.

Shift from Single-Die to Multi-Die GPUs

NVIDIA is changing how it counts GPUs. Traditionally, GPUs were counted by package. Starting with Rubin, the count is based on the number of GPU dies in each package. Blackwell's two dies were presented as a single logical GPU, whereas each Rubin package, with its two separate GPU dies, is counted as two GPUs rather than one.

This change reflects a fundamental shift in GPU design philosophy. Single dies are approaching the reticle limit, the largest area a lithography scanner can expose at once. Chip designers are therefore turning to multi-die designs, which combine several smaller dies (chiplets) to deliver more computing power.

NVIDIA has already announced that Rubin Ultra, scheduled for release in the second half of 2027, will combine four dies in a single package and deliver twice Rubin's performance. Each package will be counted as four GPUs instead of one.
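The two counting conventions can be made concrete with a small sketch. The die counts per package come from this article; the class and function names are hypothetical. The only inference is that NVL144's 144 dies, at two dies per Rubin package, correspond to 72 packages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuPackage:
    name: str
    dies: int  # GPU dies per package, as described in the article


BLACKWELL = GpuPackage("Blackwell", dies=2)
RUBIN = GpuPackage("Rubin", dies=2)
RUBIN_ULTRA = GpuPackage("Rubin Ultra", dies=4)


def gpu_count(pkg: GpuPackage, packages: int, per_die: bool) -> int:
    """Count GPUs per package (old convention) or per die (new convention)."""
    return packages * pkg.dies if per_die else packages


def packages_for(pkg: GpuPackage, die_count: int) -> int:
    """Invert per-die counting: how many packages hold die_count dies."""
    if die_count % pkg.dies:
        raise ValueError("die count is not a whole number of packages")
    return die_count // pkg.dies


if __name__ == "__main__":
    n = packages_for(RUBIN, 144)  # NVL144 counts 144 Rubin dies
    print(n, gpu_count(RUBIN, n, per_die=False), gpu_count(RUBIN, n, per_die=True))
    print(gpu_count(RUBIN_ULTRA, 1, per_die=True))
```

The same 72 packages read as "72 GPUs" under the old convention and "144 GPUs" under the new one. A single Rubin Ultra package counts as four.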

The advantage of this multi-die design is that it bypasses the physical limitations of a single piece of silicon, enabling higher compute density and better energy efficiency. The design also improves yields and reduces costs as each small chip can be manufactured and tested independently.
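The yield argument can be illustrated with the classic Poisson yield model, Y = exp(-A * D0). This is a textbook approximation chosen here for illustration, not NVIDIA's own yield model. The sketch assumes a hypothetical 16 cm^2 of total logic and a hypothetical defect density of 0.1 defects/cm^2. It also assumes each die is tested before assembly and ignores packaging yield.

```python
import math


def poisson_yield(area_cm2: float, d0_per_cm2: float) -> float:
    """Fraction of defect-free dies under the Poisson model Y = exp(-A * D0)."""
    return math.exp(-area_cm2 * d0_per_cm2)


def silicon_per_good_part(total_area_cm2: float, n_dies: int, d0_per_cm2: float) -> float:
    """Wafer area spent per good part when the logic is split into n_dies equal
    dies that are tested individually before assembly (known-good-die flow).
    Packaging/assembly yield is ignored in this simplified model."""
    die_area = total_area_cm2 / n_dies
    good_fraction = poisson_yield(die_area, d0_per_cm2)
    return n_dies * die_area / good_fraction


if __name__ == "__main__":
    AREA = 16.0  # hypothetical total logic area, cm^2 (about two reticle fields)
    D0 = 0.1     # hypothetical defect density, defects per cm^2
    for n in (1, 2, 4):
        print(f"{n} die(s): {silicon_per_good_part(AREA, n, D0):.1f} cm^2 per good part")
```

Under these assumptions, splitting the logic into one, two, or four dies costs about 79, 36, and 24 cm^2 of wafer per good part. A single 16 cm^2 die could not actually be printed, because it exceeds the reticle limit of roughly 26 mm x 33 mm. That limit is why Rubin uses two reticle-limited dies in the first place.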

Rack Revolution from NVL72 to NVL144

Like Blackwell and Blackwell Ultra, Vera Rubin will be available as a large rack-scale system. Vera CPUs and Rubin GPUs will be packaged as superchips and assembled into a rack called NVL144. With 144 GPU dies in total, the system will deliver 3.6 exaflops of FP4 inference compute, 3.3 times the 1.1 exaflops of Blackwell Ultra NVL72 in a similar rack configuration. It will also feature NVIDIA's sixth-generation NVLink interconnect, with an aggregate bandwidth of 260 TB/s (1.8 TB/s per die).
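The NVL144 figures can be sanity-checked against each other using only the numbers quoted above and Rubin's two dies per package:

- 144 dies x 1.8 TB/s gives about 259 TB/s of NVLink bandwidth, rounded to 260 TB/s.
- 3.6 / 1.1 gives about 3.3x over NVL72.
- 3.6 exaflops spread over 72 packages gives 50 petaflops each, matching Rubin's per-package FP4 figure.

The `RackSpec` type below is an illustrative helper, not an NVIDIA API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackSpec:
    dies: int                   # GPU dies in the rack (per-die counting)
    dies_per_package: int       # GPU dies per package
    fp4_exaflops: float         # rack FP4 inference compute
    nvlink_tbps_per_die: float  # NVLink bandwidth per die, TB/s


def aggregate_nvlink_tbps(r: RackSpec) -> float:
    """Total NVLink bandwidth: per-die bandwidth times number of dies."""
    return r.dies * r.nvlink_tbps_per_die


def fp4_pflops_per_package(r: RackSpec) -> float:
    """Rack FP4 compute divided across packages (1 exaflop = 1000 petaflops)."""
    return r.fp4_exaflops * 1000 / (r.dies / r.dies_per_package)


NVL144 = RackSpec(dies=144, dies_per_package=2, fp4_exaflops=3.6, nvlink_tbps_per_die=1.8)
NVL72_FP4_EXAFLOPS = 1.1  # Blackwell Ultra NVL72, as quoted in the article

if __name__ == "__main__":
    print(f"Aggregate NVLink: {aggregate_nvlink_tbps(NVL144):.1f} TB/s")
    print(f"FP4 per package:  {fp4_pflops_per_package(NVL144):.1f} PF")
    print(f"vs NVL72:         {NVL144.fp4_exaflops / NVL72_FP4_EXAFLOPS:.2f}x")
```

The script prints 259.2 TB/s, 50.0 PF, and 3.27x. These are consistent with the rounded marketing figures of 260 TB/s, 50 petaflops, and 3.3x.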

At the data center level, Vera Rubin also brings transformative changes. On paper, the NVL144 rack doubles the GPU count of the previous NVL72 rack. Much of that doubling, however, comes from the new per-die counting: NVL72 already held 144 Blackwell dies in 72 packages. The real gain is compute density, with about 3.3 times the FP4 inference throughput in a similar rack configuration. This is a major boon for cloud service providers and large AI research institutions. They can deploy more computing resources in the same physical space, reducing infrastructure costs and improving operational efficiency.

Higher compute density also means higher power density. To address this, the Vera Rubin system adopts several new technologies, including advanced cooling solutions and more efficient power delivery. These ensure that performance gains come with better energy efficiency.

900-fold Performance Boost

NVIDIA cites a staggering 900x performance leap for the Rubin architecture over Hopper. That leap enables larger models to be trained, more complex problems to be tackled, and more intelligent systems to be built. The breakthrough comes from the following improvements.

Innovation in Architecture

Vera Rubin introduces an entirely new compute unit design, significantly enhancing the efficiency of each unit. Additionally, the internal data flow pathways have been optimized, reducing transmission bottlenecks.

Advancements in Fabrication Technology

NVIDIA has yet to officially confirm Vera Rubin's manufacturing process. Reports point to TSMC's 3nm-class node (N3P or a custom 3NP variant), a step beyond the 4NP process used for Blackwell. A more refined process means smaller transistors, higher transistor density, and lower power consumption.

Improvements in Memory Bandwidth

AI workloads demand not only immense computational power but also fast memory access. Vera Rubin brings major enhancements to its memory subsystem, including the move to HBM4. These deliver higher bandwidth and lower latency so that its compute units are not starved for data.
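The standard roofline model shows why bandwidth matters as much as peak FLOPS. Attainable throughput is the lower of two limits: peak compute, or memory bandwidth times the kernel's arithmetic intensity (FLOPs per byte moved). This article does not state Rubin's HBM4 bandwidth. The sketch therefore uses the Blackwell figures quoted earlier, 20 petaflops FP4 and 8 TB/s. The kernel intensities are hypothetical.

```python
def attainable_tflops(intensity_flop_per_byte: float, peak_tflops: float, bw_tb_per_s: float) -> float:
    """Roofline model: throughput is capped by peak compute or by
    memory bandwidth x arithmetic intensity, whichever is lower.
    Units: TB/s x FLOP/byte = TFLOP/s."""
    return min(peak_tflops, intensity_flop_per_byte * bw_tb_per_s)


def ridge_point(peak_tflops: float, bw_tb_per_s: float) -> float:
    """Arithmetic intensity (FLOP/byte) at which a kernel stops being memory-bound."""
    return peak_tflops / bw_tb_per_s


if __name__ == "__main__":
    PEAK = 20_000.0  # 20 PF FP4 (Blackwell, as quoted in the article), in TFLOP/s
    BW = 8.0         # 8 TB/s HBM bandwidth (Blackwell, as quoted in the article)
    print(f"ridge point: {ridge_point(PEAK, BW):.0f} FLOP/byte")
    for ai in (2.0, 100.0, 5_000.0):  # hypothetical kernel intensities
        t = attainable_tflops(ai, PEAK, BW)
        print(f"AI={ai:>7.0f}: {t:>8.0f} TFLOP/s ({100 * t / PEAK:.2f}% of peak)")
```

With these numbers, the ridge point is 2,500 FLOP/byte, so any kernel below that intensity is limited by memory rather than compute. A kernel at 2 FLOP/byte reaches only 16 TFLOP/s. That is why faster memory is needed for the compute units to reach their potential.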

Optimizations Across the Full Stack

Hardware improvements require corresponding software enhancements to fully unleash their potential. NVIDIA’s CUDA ecosystem and deep learning libraries will be specifically optimized for the Vera Rubin architecture, ensuring that every ounce of computational power is effectively utilized.

From hardware (GPUs, CPUs) to software (CUDA, deep learning libraries) and application frameworks (such as Omniverse), NVIDIA provides a comprehensive full-stack AI solution. This end-to-end capability makes it easier for customers to adopt and deploy AI technologies, reducing technical barriers and integration costs.

Broad Application

Vera Rubin's applications will span an exceptionally broad range, from cloud data centers to edge devices, bringing its performance advantages to each scenario.

Cloud Data Centers

Vera Rubin will become the preferred platform for training and deploying large-scale AI models. Its high performance and energy efficiency make it particularly suitable for building AI supercomputers, providing immense computational power for research institutions, internet companies, and enterprise clients.

Autonomous Driving

Vera Rubin’s powerful inference capabilities will enhance real-time environmental perception and decision-making efficiency. Autonomous vehicles must process massive amounts of sensor data within milliseconds to make safe driving decisions, demanding exceptional computing power. Vera Rubin’s introduction will accelerate the commercialization of Level 4 and Level 5 autonomous driving technologies.

Healthcare Industry

Vera Rubin will also drive advancements in precision medicine and personalized treatment. By analyzing genomic data, medical imaging, and electronic health records, AI can assist doctors in making more accurate diagnoses and treatment decisions. Vera Rubin’s high performance will enable faster and more precise medical analyses.

Scientific Research

Vera Rubin will further accelerate scientific research and could lead to more breakthroughs by speeding up complex computational tasks, from climate modeling to drug discovery. DeepMind's AlphaFold, with its groundbreaking progress in protein structure prediction, shows what abundant AI compute can achieve in science.

Conclusion

The Vera Rubin platform will keep raising available AI computing power. Backed by NVIDIA's technical expertise and ecosystem advantages, it should keep the company ahead in the high-end AI chip market, even as competitors such as AMD and Intel actively expand their AI chip portfolios. It will accelerate both the adoption of AI applications and innovation in them. More computing power enables more complex AI models, higher accuracy, and faster response times. Many AI applications that were previously limited by compute resources will become feasible, driving new business models and market opportunities.

To fully harness Vera Rubin's performance advantages, data centers will need to upgrade their power supply, cooling systems, and networking infrastructure. This trend will stimulate growth across the entire data center supply chain, creating significant market demand and driving data center infrastructure upgrades.
